Explainable Personalization AI for Health and Well-Being

PROJECT OVERVIEW

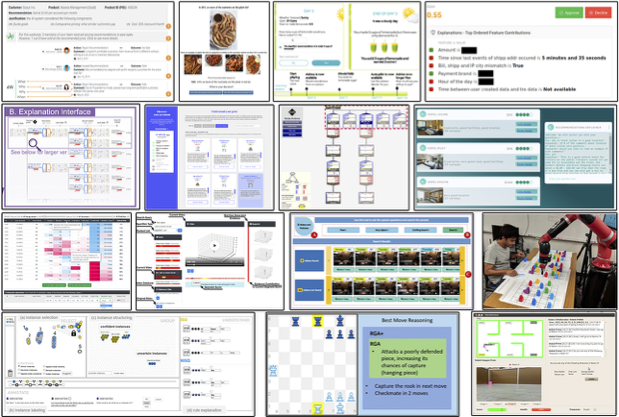

Despite rapid technical development, Explainable AI (XAI) has yet to achieve significant success in real-world applications because of its low usability and efficacy. This project investigates the notions of transparency and interpretability in AI, as well as human-centered AI explanations, in well-being technologies. It is driven by questions such as: How can explanations, rather than explainability, help non-expert users understand, inspect, and collaborate with AI products to improve their health and well-being? In LLM-based mental well-being products, can AI explanations prompt users' self-reflection and foster different kinds of human-AI relations?

PUBLICATIONS

“The Centers and Margins of Modeling Humans in Well-being Technologies: A Decentering Approach”.

Zhu, J., Sanches, P., Tsaknaki, V., van der Maden, W., & Kaklopoulou, I.

In ACM PACM on Human-Computer Interaction, CHI 2025.

“Can Games Be AI Explanations? An Exploratory Study of Simulation Games”.

Villareale, J., Fox, T., & Zhu, J.

In Proceedings of the DiGRA 2024 Conference: Playgrounds, September 2024.

“Slide to Explore ‘What If’: An Analysis of Explainable Interfaces”.

Zhu, J., & Meyer, L. S.

In Adjunct Proceedings of the 2024 Nordic Conference on Human-Computer Interaction, 2024.

Villareale, J., Harteveld, C., & Zhu, J.

In ACM PACM on Human-Computer Interaction, CHI PLAY 2022.

“How Human-Centered Explainable AI Interfaces are Designed and Evaluated: A Systematic Survey”.

Nguyen, T., Canossa, A., & Zhu, J.

arXiv preprint arXiv:2403.14496.

Zhu, J., Dallal, D. H., Gray, R. C., Villareale, J., Ontañón, S., Forman, E. M., & Arigo, D.

In Proceedings of the ACM on Human-Computer Interaction, 5(CSCW), 1–21, 2021.